Ship AI-generated models that you can actually trust.

Built by the founders of scikit-learn, Skore is the pre-MLOps platform that helps data science teams follow good practices, ensure reproducibility, and choose the best model for production.

Sound familiar?

You're not the only data team dealing with this.

Meet Skore.

The data science platform that brings structure, collaboration, and trust to your ML workflow, without leaving your notebook.

pip install skore

Start locally

Your personal ML assistant inside the notebook. Validates pipelines, selects the right metrics, detects data leakage, and suggests best practices, powered by scikit-learn's own methodology.

Automated cross-validation reports

Data leakage detection

Smart metric selection

Easy model comparison

Works with any scikit-learn compatible estimator

Free forever

Works offline

No account needed

skore.probabl.ai

Share it remotely

Your team's shared experiment library in the cloud. Store, compare, and annotate models together: a single source of truth that survives team changes and project pivots.

Shared experiment library (browse, compare, pick)

Auto-generated model cards & documentation

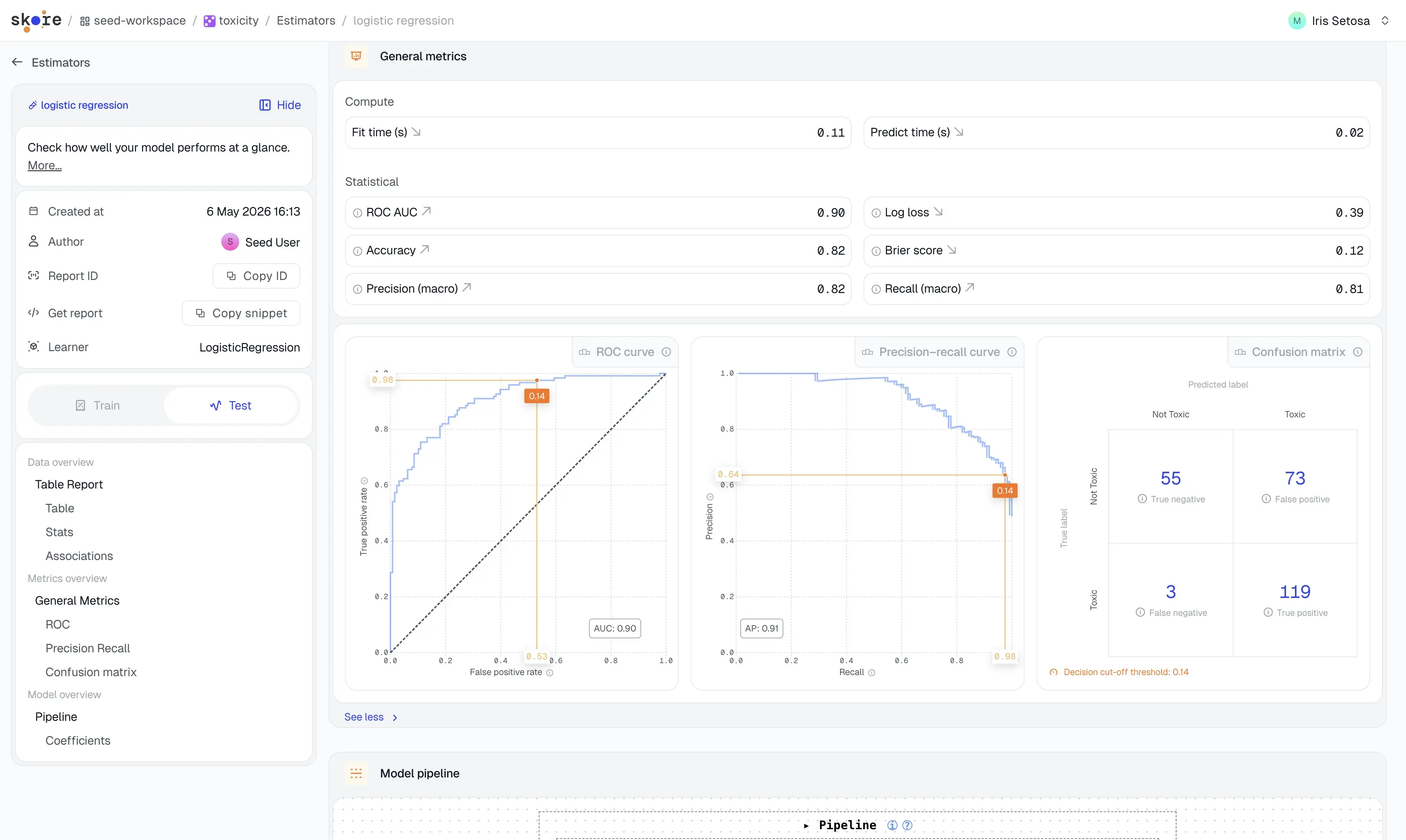

Visual reports for non-technical stakeholders

Team activity feed & comments

Governance & compliance ready (EU AI Act)

Free for 1 user

Team plan starts at $1,750/mo

Built for the way you actually work.

AI Agents

Your AI writes the code.

Skore makes sure it works.

AI coding tools generate scikit-learn pipelines in seconds. Skore validates them, detecting data leaks, selecting the right metrics, and flagging silent errors before they reach production.

Automatic pipeline validation (structure, leakage, overfitting)

Smart metric recommendations based on your use case

Works with any GenAI agent
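
One common form of data leakage is a test row that also appears verbatim in the training set. The plain-Python sketch below shows that single check for illustration only. It is not Skore's implementation, and `find_train_test_overlap` is a hypothetical helper name, not part of the Skore or scikit-learn API.

```python
from typing import Hashable, Sequence


def find_train_test_overlap(
    train_rows: Sequence[Sequence[Hashable]],
    test_rows: Sequence[Sequence[Hashable]],
) -> list[int]:
    """Return the indices of test rows that also appear verbatim in the training rows."""
    # Hypothetical helper for illustration; exact-match duplicates only.
    seen = {tuple(row) for row in train_rows}
    return [i for i, row in enumerate(test_rows) if tuple(row) in seen]


# Toy data: the first test row duplicates the second training row.
train = [(5.1, 3.5, "a"), (4.9, 3.0, "b"), (6.2, 2.9, "c")]
test = [(4.9, 3.0, "b"), (5.9, 3.2, "d")]

leaked = find_train_test_overlap(train, test)
print(f"{len(leaked)} of {len(test)} test rows also appear in train: {leaked}")
```

A check like this only catches exact duplicates. Leaks introduced by preprocessing fitted on the full dataset, or by target-derived features, need pipeline-level inspection.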

Team

Compare, share, decide, as a team.

The Skore platform gives your team a place to share experiments. Browse, compare, and pick the best model together, instead of working in silos with duplicate notebooks. Nothing is lost when someone leaves.

Shared experiment storage with version history

Side-by-side model comparison with visual diff

Comments, approvals, and activity feed

Data × Business

Make your work speak business.

Skore auto-generates model cards, documentation, and visual reports tailored to your domain, in terms your stakeholders actually understand. Less time justifying, more time building.

Auto-generated model cards (purpose, bias risk, audit status)

Export to PDF, share with non-technical stakeholders

EU AI Act compliance-ready documentation

90 seconds. Full workflow.

From pip install to team comparison, watch Skore in action.

Compatible with:

MLflow

DVC

Jupyter

VS Code

Docker

Kubernetes

AWS

GCP

Azure

On-premise

They are talking about us

Start free.

Scale when you're ready.

No hidden fees. No credit card required. Upgrade only when your team needs it.

Free

Run experiments locally or remotely — no setup, no commitment

€0 forever

Get started

Python OSS library:

Create structured evaluation reports

Track your experiments locally and remotely

Skore Hub:

1 user

1 workspace

3 projects

Basic recommendations & guidance

Intuitive exploration through experiments

Integrations:

MLflow compatibility

Deployment:

SaaS

Team

Share insights, align stakeholders, and deploy on your own infrastructure

€/$1,950/month, first 5 users included

Python OSS library:

Create structured evaluation reports

Track your experiments locally and remotely

Skore Hub:

5 users included

1 workspace

Unlimited projects

Advanced recommendations & guidance

Intuitive exploration through experiments

Integrations:

MLflow compatibility

Deployment:

SaaS, Private Cloud

Collaboration:

Shared project reports — push & pull

Cross-project report search

Stakeholder & domain expert access

Model cards export

Versioned presentations & notes

Workspace & project permission management

Enterprise

Full control over deployment, data, and governance — at any scale

Custom

Contact Sales

Python OSS library:

Create structured evaluation reports

Track your experiments locally and remotely

Skore Hub:

Unlimited users

Unlimited workspaces

Unlimited projects

Advanced recommendations & guidance

Intuitive exploration through experiments

Integrations:

MLflow compatibility

Deployment:

SaaS, Private Cloud, or On-Premises

Collaboration:

Shared project reports — push & pull

Cross-project report search

Stakeholder & domain expert access

Model cards export

Versioned presentations & notes

Workspace & project permission management
